AGI isn't coming ... soon

November 29, 2024

It’s time we talk about the disconnect between what the major AI vendors are preaching and the reality of their technology. OpenAI, Anthropic, and others keep pushing the narrative that AGI (Artificial General Intelligence) is right around the corner. Sam Altman, OpenAI’s CEO, loves to tease the idea that AGI is almost here, even as key figures quietly exit the company. If they were genuinely so close to achieving AGI, would people really be leaving?

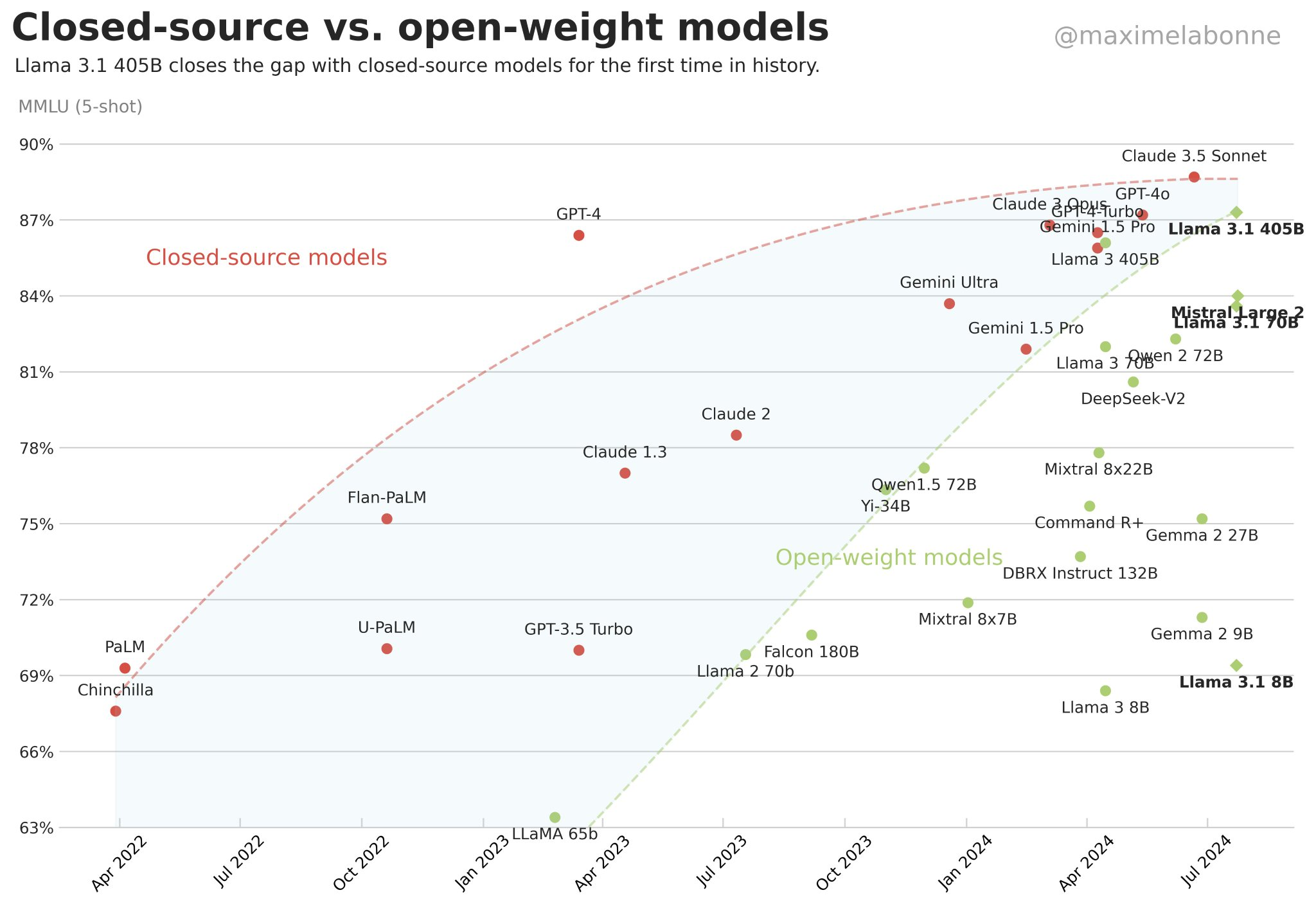

At the same time, their latest models don’t exactly scream “revolutionary.” o1 is essentially GPT-4 with a longer context window and a clever application of chain-of-thought prompting, a well-known technique that is already in wide use. It’s not a breakthrough; it’s an optimisation. This points to a larger issue: new models are plateauing in effectiveness compared to their predecessors.

Image sourced from @maximelabonne

Rather than rolling out a costly and marginally better GPT-5, OpenAI seems to be doubling down on improving GPT-4 for diverse use cases. Meanwhile, Anthropic has been consistently releasing incrementally better models, but nothing that mirrors the leaps we saw from GPT-2 to GPT-3 or even GPT-3.5.

What This Means for the AI Ecosystem

This slowdown doesn’t spell the end for AI innovation, but it does signal a shift in focus. Companies are no longer looking to create one general-purpose tool that does everything, but rather specialised ones.

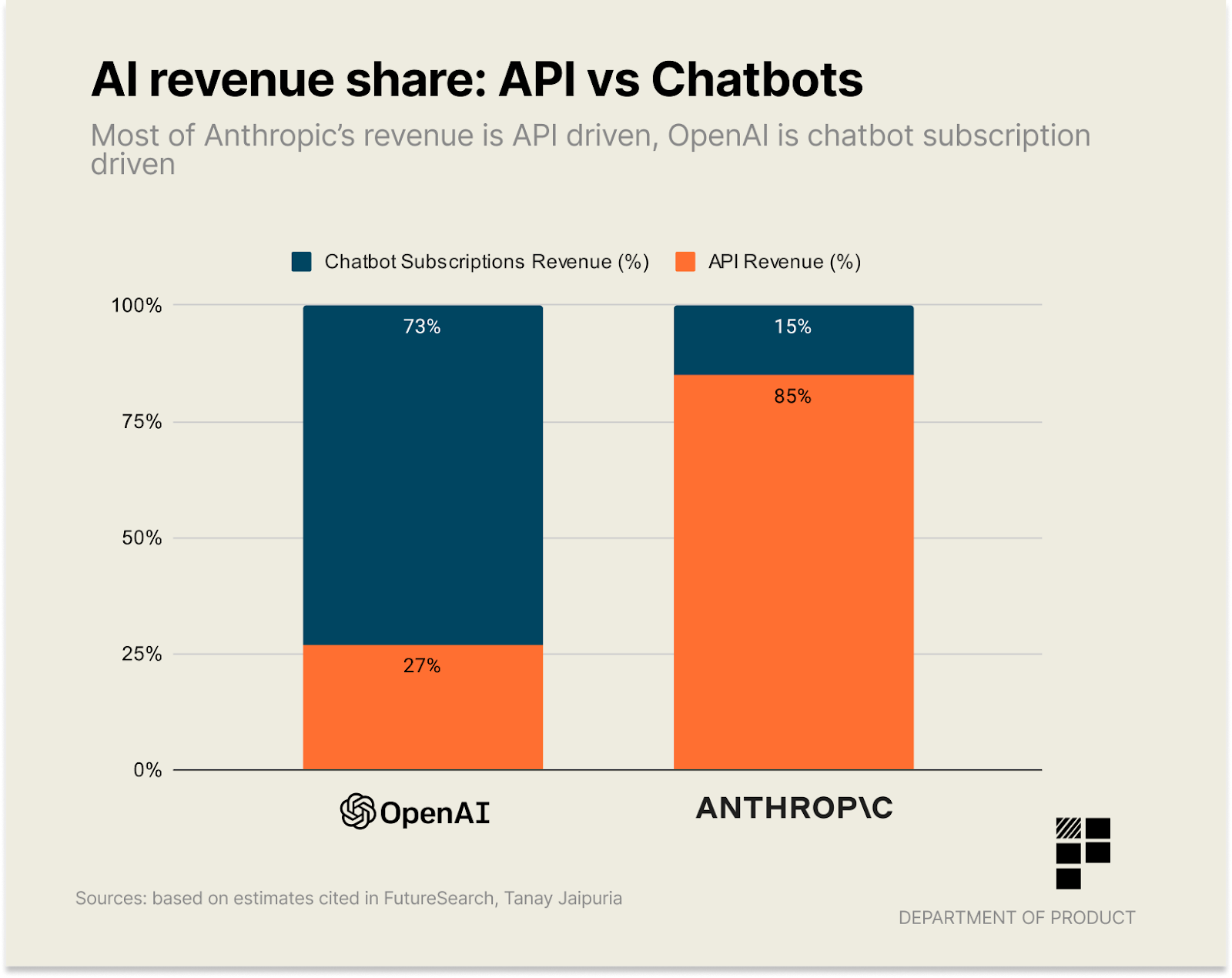

AI vendors are no longer competing solely on model performance. OpenAI, for example, has recognised that they no longer have the best models in the game. Anthropic often edges them out on raw performance, but OpenAI is excelling at building consumer products like ChatGPT. Over 75% of OpenAI’s revenue comes from ChatGPT, which is now getting better apps, native search integration, and an overall improved user experience.

Anthropic, on the other hand, invests heavily in their APIs, which account for 85% of their revenue. Their models are a few percentage points better, but their consumer-facing products lack polish. This leaves room for third parties to build superior applications on top of their foundations.

Image sourced from Department of Product

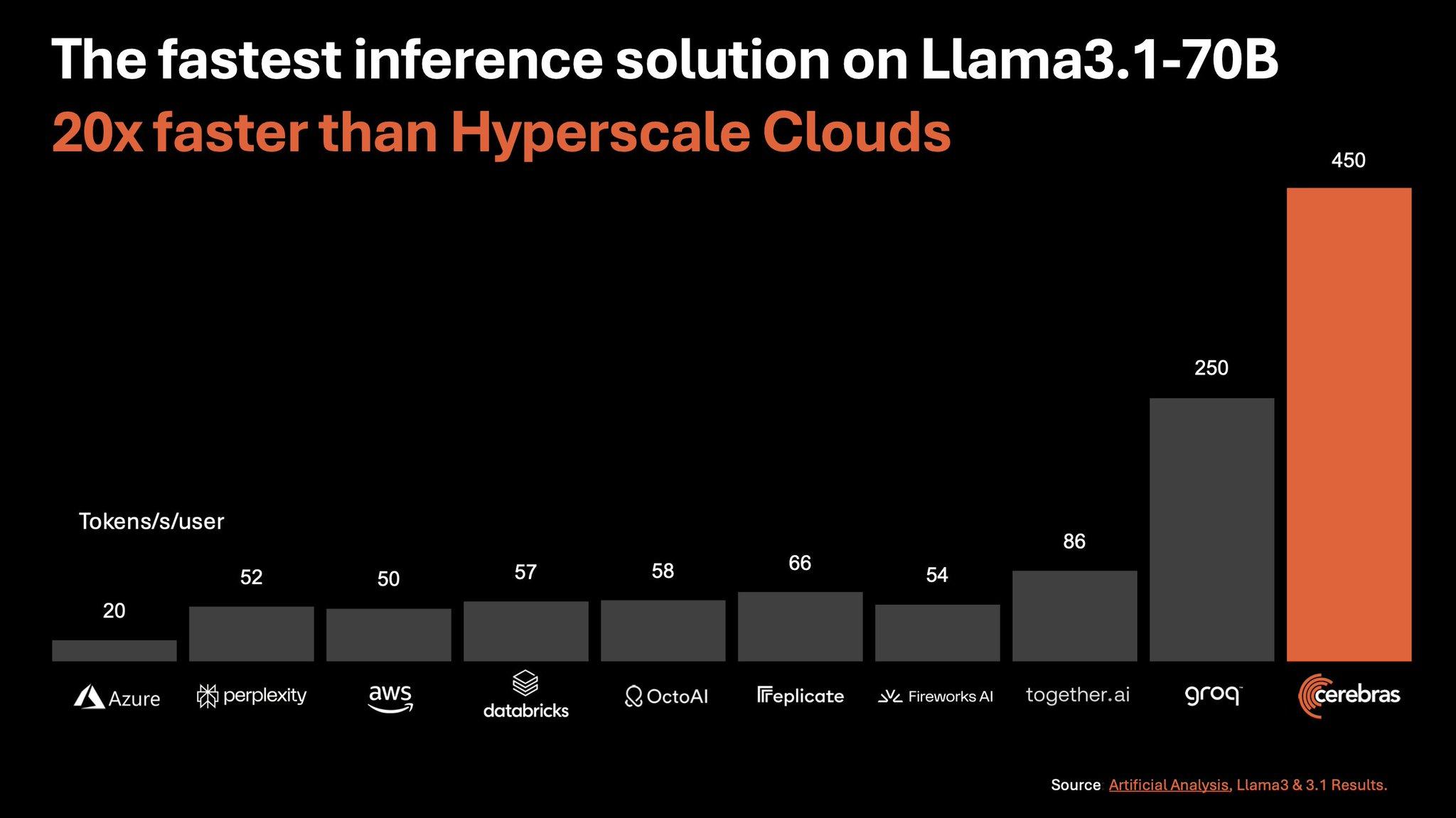

Specialised hardware for large language models (LLMs) is another exciting development. Companies like Cerebras and Graphcore are designing chips that perform inference three to five times faster than traditional GPUs while significantly reducing costs. This efficiency could unlock use cases previously deemed too slow or expensive.

Image sourced from @CerebrasSystems

We’re entering an era where AI products won’t necessarily be smarter but will be much faster and more affordable. Both are key factors in scaling adoption: more people get access, and vendors can keep up with the demand.

Better Optimisation, Not Smarter Models

AI development is starting to resemble the lifecycle of video game consoles. Early titles for the Xbox 360, like Perfect Dark Zero, were graphically decent but nowhere near the quality of later releases like Halo 4. The hardware didn’t change, but developers learned to optimise their code and tools to get the most out of it.

The same is happening with LLMs. Developers are finding smarter ways to use the models, maximising strengths and minimising weaknesses. Even without significant advances in model intelligence, products built on LLMs are improving dramatically.

The Agent Problem

This brings us to the elephant in the room: agents. There’s a lot of hype around AI agents automating complex tasks end-to-end, but the reality is far less glamorous. Even Anthropic’s Claude struggles with basic agent-like tasks, with Anthropic itself citing a success rate of just 14.9%.

Without near-perfect reliability, fully autonomous agents are more liability than innovation. A single error in a multi-step process compounds quickly, derailing the entire task. For now, human-in-the-loop systems are necessary to ensure functionality.
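To put rough numbers on how errors compound, here is a minimal sketch. It assumes every step succeeds independently with the same probability, which is a simplification and not a measurement of any real agent. The function name `chain_success_rate` and the 95% figure are illustrative assumptions.

```python
def chain_success_rate(step_success: float, steps: int) -> float:
    """Chance that every step of a task succeeds.

    Assumes each step succeeds independently with the same probability,
    so the overall chance is step_success multiplied by itself `steps` times.
    """
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    return step_success ** steps


# Illustrative figure only: a hypothetical agent that gets each step right 95% of the time.
for steps in (1, 5, 10, 20):
    rate = chain_success_rate(0.95, steps)
    print(f"{steps:>2} steps at 95% per step: {rate:.1%} end-to-end")
```

Under these assumptions, a step that works 95% of the time yields a ten-step task that finishes cleanly only about 60% of the time, and a twenty-step task closer to 36%. That is why a human checkpoint between steps matters so much.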

However, smaller, task-specific agents—“microagents”—are showing promise. For example, open-source projects are using AI to iterate on code until it passes tests, and tools like Builder.io’s AI turn Figma designs into working code using a series of interconnected smaller agents. These approaches offer increased reliability, speed, and accuracy over traditional, monolithic LLMs.
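The "iterate until the tests pass" pattern can be sketched in a few lines. This is an assumed outline of the general idea, not the implementation of Builder.io or any specific project. `iterate_until_passing`, `fake_generate`, and `run_add_tests` are hypothetical names, and `fake_generate` stands in for a real model call.

```python
from typing import Callable, Optional


def iterate_until_passing(
    generate: Callable[[Optional[str]], str],
    run_tests: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes the tests, or None if attempts run out."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(feedback)    # feedback is None on the first attempt
        feedback = run_tests(candidate)   # None means every test passed
        if feedback is None:
            return candidate
    return None  # still failing: hand the task back to a human


def fake_generate(feedback: Optional[str]) -> str:
    """Hypothetical stand-in for a model: wrong first, corrected once it sees feedback."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def run_add_tests(source: str) -> Optional[str]:
    """Run one tiny test against the generated source; return an error message or None."""
    namespace: dict = {}
    exec(source, namespace)  # acceptable for this toy; real tools would sandbox generated code
    result = namespace["add"](2, 3)
    return None if result == 5 else f"add(2, 3) returned {result}, expected 5"


fixed = iterate_until_passing(fake_generate, run_add_tests)
print(fixed)
```

The narrow scope is the point: the agent has one job, a mechanical check decides whether it succeeded, and a hard attempt limit means failures surface instead of compounding.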

So What Is the Future of AI?

While the dream of AGI might be far off, the AI space is still moving at an extreme pace. Faster hardware, better optimisation, and more practical applications are driving meaningful progress.

Expect more reliable tools for automating tedious tasks, from content creation to code generation, as vendors and developers continue to refine their approaches. But as the hype grows, remember to watch for what’s actually being used versus what’s just gathering buzz.

The road ahead is less about chasing an AGI pipe dream and more about delivering real value today—and that’s not such a bad thing.